A few weeks ago, I was in a group of software engineers talking about AI workflows for enterprise software. The conclusion was simple: “If you want to succeed, build a Claude Skill and integrate with Claude Code. Better tools will win.”

I’ve heard variations of this idea everywhere: from big tech to startups to solo founders. Successful AI, the thinking goes, means better integrating the tools we already use with a centralized platform and extending them to cover more use cases.

This sounds right, but it’s incomplete.

AI coding tools on personal machines are powerful, but organizations depend on collaborative work that can be seen, shared, and carried forward. Real workflows span teams, require auditability, and need to produce legible progress for others. This is broader than software engineering alone. The real challenge is supporting the many job functions where workflows matter and AI adoption is still early.

AI Tool Blindness is the tendency to assume that better tools will win, based on an extrapolation of what we already use. It ignores the constraints of what a real solution requires. This pattern shows up across roles: people propose solutions based on the tools they understand, rather than starting from first principles.

History shows that better tools often fail to win out once they have to scale to an enterprise or organizational level. Slack was positioned as a better way for teams to talk to each other and as an email replacement. Slack (and Teams) are now industry mainstays, but the systems they were meant to replace, like email, still linger. Searching for Slack messages will never be great because ephemeral chat as a system of record is a compromised premise.

Git was miles ahead of SVN when it was released, but being better wasn’t enough on its own to justify replacement. What made Git finally take off wasn’t Git with better integrations, but a platform version of Git that could host collaborative features. This is GitHub: no PGP keys, one-click repo creation, and pull requests from developers all over the globe.

Early on, I saw AI work best for small, contained coding projects, like creating database queries in a new syntax. I’ve also built greenfield MVPs that were impressive alternatives to existing workflows, and created a side project for quantifying uncertainty in portfolio returns. Even in large and complex repos, it’s possible to crush well-scoped tickets when the problem is clear, the coding task is straightforward, and the end condition is to get a teammate to approve the PR and roll it up into a release you don’t own. AI is effective when the constraint is limited to code modification.

I’ve also seen AI fail to make something substantially easier. I was asked to make a specific product improvement based on a clear signal of customer pain. At first, it was easy enough to develop a feature list, have AI come up with an integration plan, and present the results. Things slowed down the moment the work started to cross team boundaries. Changes needed buy-in, and buy-in required presentations and alignment with code owners who had their own incentives. Even once the PR is code complete, approvals can drag, and the release process can be even slower. The difficulty wasn’t writing code or making sure it was right. It was deciding what should happen next and who was responsible for it. That’s not a coding problem. It’s an organizational one.

I’ve also seen this outside of software engineering. A recent discussion on AI tools for investing described the same issue: most agents treat a deliverable, like a spreadsheet or report, as the end goal. But in real workflows, that’s just the beginning. The work evolves over time, across sessions, as new information comes in and decisions are made. This points to a deeper limitation: most AI tools are session-based. They optimize for producing an answer, not maintaining shared state.

Organizations don’t operate in sessions. They operate on persistent, shared artifacts that co-evolve across team boundaries and can be retrieved later. AI workflows need to operate at this level.

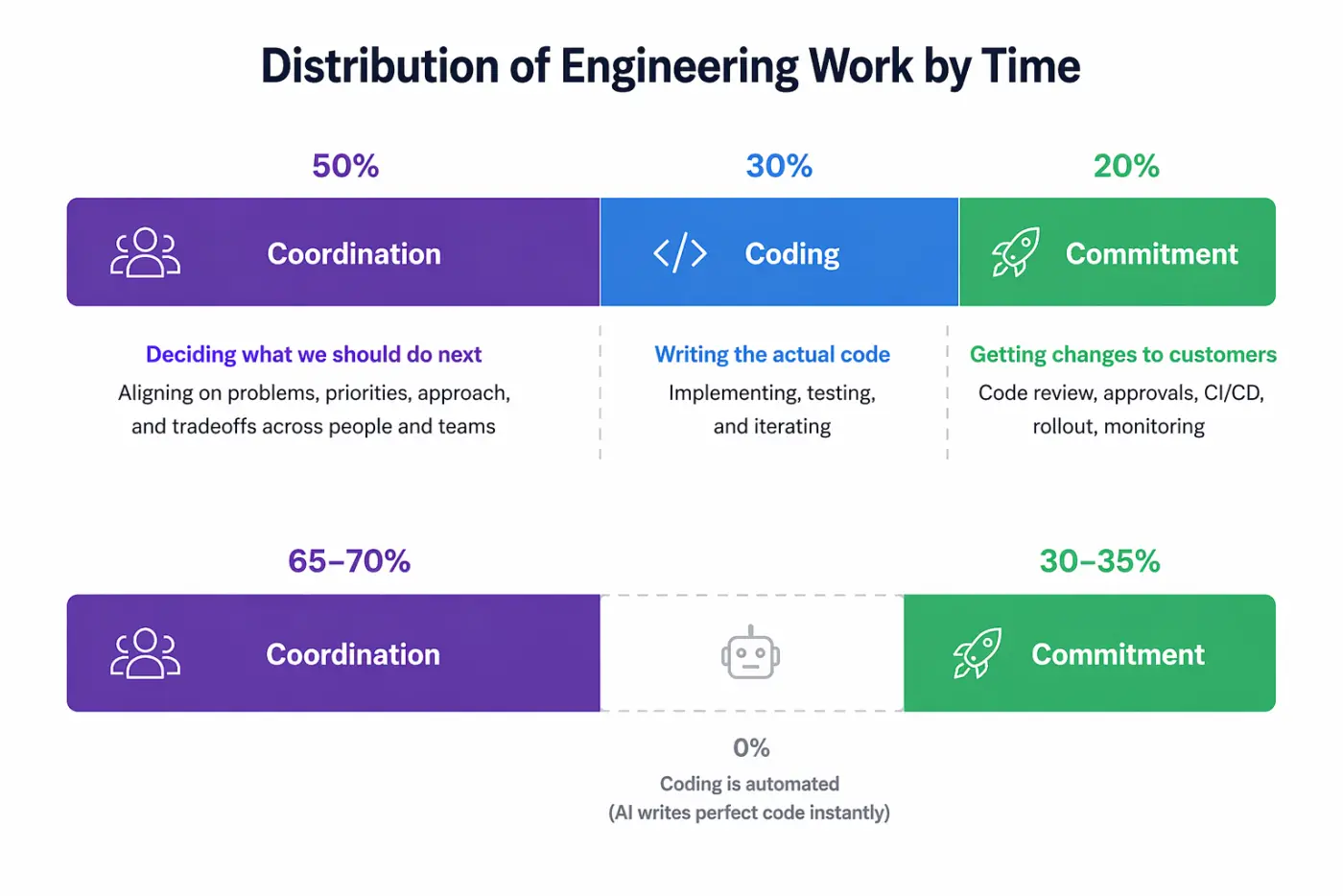

For software engineering tasks, one way to reason about how long something takes, and where the constraint is, is to look at the “task distribution by time”:

- Coordination: deciding what we should do next

- Coding: writing the actual code

- Commitment: getting that coding change into production and in front of customers

In the past three years as a Senior Engineer and tech lead at both startups and big tech, I’ve probably spent 50% of my time on coordination, 30% on coding, and another 20% on getting those PRs approved and doing the rollout. Talking to other engineers, it’s relatively rare for seniors to spend more than 50% of their time writing code. Survey data has suggested for years that developers spend far less time writing code than people assume. SonarSource reported in 2019 that developers spend about 32% of the workweek “actually writing or improving code.”[1]

Writing code is critical for software engineers, but it’s not the constraint. Even if agents could write correct code instantly, we’d still be code owners sitting at an organizational intersection point, where the next layer of problems is left unsolved by CLI tools.
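As a rough back-of-the-envelope check, the 50/30/20 split from my own experience can be plugged into an Amdahl’s-law-style estimate. This is a minimal sketch, not a measurement: the function name `overall_speedup` and the assumption that the three categories are independent and add up linearly are mine, for illustration only.

```python
def overall_speedup(shares, speedups):
    """Amdahl's-law-style estimate of end-to-end speedup.

    shares:   fraction of total time spent in each category
    speedups: how many times faster each category becomes
              (categories not listed are assumed unchanged)
    """
    old_time = sum(shares.values())
    new_time = sum(
        share / speedups.get(name, 1.0) for name, share in shares.items()
    )
    return old_time / new_time


# Hypothetical inputs: the rough personal split described in this post.
shares = {"coordination": 0.50, "coding": 0.30, "commitment": 0.20}

# Coding gets 10x faster, everything else stays the same.
print(round(overall_speedup(shares, {"coding": 10}), 2))

# Coding becomes instantaneous (infinite speedup).
print(round(overall_speedup(shares, {"coding": float("inf")}), 2))
```

Under these assumptions, even instantaneous coding caps the end-to-end gain at 1 / 0.7, or roughly 1.43x, because the coordination and commitment time is untouched.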

This is why you can’t build strategy by solely looking at the tools you have available and expecting them to work within a new system. For AI workflows we need to target the collaboration surface between individuals. That might look like Claude Code or Codex, but it will need to account for governance, security, and compliance, along with all the features required to work across software teams, get feedback on documents, and share proposals for review. This system hasn’t been fully realized. Folks are trying, but the answer won’t be jamming more features into software tools for non-software use cases. Faster horses are great, but you can’t park one in the city, nor commute 30 miles in an hour twice a day.

Conclusion

The software engineers I’ve talked to aren’t wrong. Better tools matter, but tools don’t decide what gets done. Systems do. When it comes to integrating AI into real workflows, the target isn’t the individual. And if AI is going to matter beyond demos, it won’t be because we built better tools. It will be because we embedded them where organizations actually operate.

We need to build cars.

Footnotes

1. https://www.sonarsource.com/blog/how-much-time-do-developers-spend-actually-writing-code